Squeezing FPGA Memory

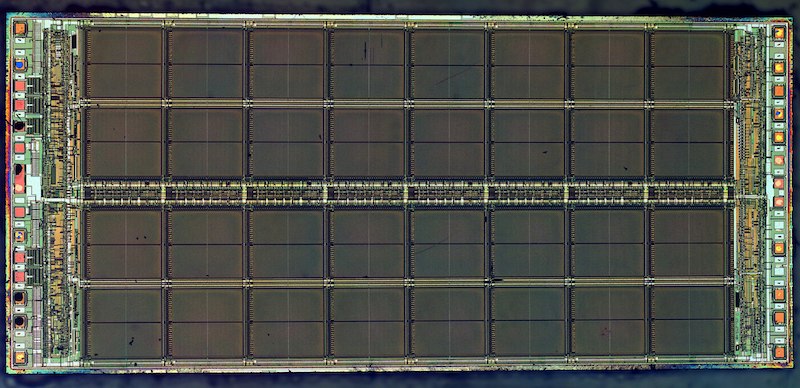

I’m developing an Apple II disk controller that’s based on the UDC disk controller design. The original UDC card had 8K of ROM and 2K of RAM, so it needs 10K of combined memory. The FPGA device I’m using for prototyping, a Lattice MachXO2-1200, has 8K of embedded block RAM (EBR) and 1.25K of distributed RAM. It also has 8K of “user flash memory” (UFM). So will the UDC design fit? It’s close, but I think the answer is no.

At first I thought I could store the ROM data in the FPGA’s UFM section, but that doesn’t look promising. I can store the data there, but compared to embedded block RAM, accessing UFM is inconvenient and probably impractical for live execution of 6502 code. Accessing the UFM requires setting up a Wishbone interface in the FPGA’s Verilog code, starting a memory transaction, and reading out an entire page of flash (16 bytes). It’s also pretty slow. I don’t think it’ll be possible to read an arbitrary byte of UFM and return it to the CPU within ~500 ns, as would be required for directly executing code from it.

OK, so no UFM. Maybe I can store the 8K of ROM data in EBR, using RAM to hold what’s technically ROM? That would work, but it would leave only 1.25K of distributed FPGA RAM remaining to implement the required 2K of RAM for the disk controller. It’s 768 bytes short. No good.
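To make that arithmetic explicit, here's a minimal Python sketch using only the sizes quoted above. The constant and function names are made up for illustration, and it treats memory as a simple byte budget, ignoring how the FPGA tools actually allocate EBR blocks.

```python
KIB = 1024

# MachXO2-1200 figures quoted in the post, in bytes.
EBR_BYTES = 8 * KIB
DISTRIBUTED_BYTES = KIB + KIB // 4   # 1.25K

# UDC card requirements quoted in the post.
ROM_BYTES = 8 * KIB
RAM_BYTES = 2 * KIB


def ram_shortfall() -> int:
    """Bytes of card RAM that don't fit if the ROM image fills EBR
    and the card RAM has to live in whatever memory is left."""
    ebr_left = EBR_BYTES - ROM_BYTES
    available_for_ram = ebr_left + DISTRIBUTED_BYTES
    return max(0, RAM_BYTES - available_for_ram)


print(ram_shortfall())  # 768
```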

I could switch to a larger FPGA with more memory, or add a separate RAM or ROM chip. But that would increase cost and complexity, and anyway wouldn’t help with my prototype board that’s already built.

Stupid Idea #1

From my analysis of the UDC ROM, I think the upper half of the card’s RAM is only used when communicating with Smartport drives. So I might be able to reduce the RAM from 2K to 1K, and at least I’d be able to test whether 3.5 inch and 5.25 inch drive support works. Using 8K of EBR and 1K of distributed RAM, I’d have a whopping 256 bytes of RAM left. Will it work? I think distributed RAM just means using the FPGA’s logic resources as RAM, so this approach would use 80% of the FPGA’s logic resources and only leave 20% remaining for the actual card functionality, like the IWM model and other logic. It might work, it might not.

Stupid Idea #2

The 8K of ROM isn’t one large chunk. It’s divided into 1K banks that can be mapped into a single 1K region of the computer’s address space. There’s already a small code routine to facilitate the bank switching. What if I could somehow make this routine copy the desired 1K block from UFM to EBR at the moment it’s needed? Then I’d have 8K in UFM, with a 1K cache in EBR, and the 2K of RAM also in EBR.

This would definitely fit, but there would be a delay every time code execution moved to a different 1K ROM page. How long does it take to move 1024 bytes from UFM to EBR? I’m not sure, but I’ll guess it’s tens to hundreds of microseconds. Will that cause problems? Maybe. Will this approach be a pain to implement? Definitely.
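As a rough behavioral model of this idea (not HDL, and not the UDC's actual bank-switch routine), the Python sketch below copies one 1K bank from a UFM image into a 1K cache, one 16-byte page at a time. The class name, method names, and the test image are all hypothetical.

```python
PAGE_BYTES = 16      # UFM page size mentioned earlier in the post
BANK_BYTES = 1024    # one ROM bank
NUM_BANKS = 8        # 8K of ROM in 1K banks


class BankCache:
    """Models 8K of ROM held in UFM with a single 1K bank cached in EBR."""

    def __init__(self, ufm_image: bytes):
        if len(ufm_image) != NUM_BANKS * BANK_BYTES:
            raise ValueError("UFM image must be exactly 8K")
        self.ufm = ufm_image
        self.cache = bytearray(BANK_BYTES)
        self.current_bank = None
        self.pages_copied = 0   # running count, to show the cost of each switch

    def select_bank(self, bank: int) -> None:
        """Copy the requested bank into the cache unless it is already there."""
        if not 0 <= bank < NUM_BANKS:
            raise ValueError("bank out of range")
        if bank == self.current_bank:
            return
        base = bank * BANK_BYTES
        for offset in range(0, BANK_BYTES, PAGE_BYTES):
            page = self.ufm[base + offset : base + offset + PAGE_BYTES]
            self.cache[offset : offset + PAGE_BYTES] = page
            self.pages_copied += 1
        self.current_bank = bank

    def read(self, offset: int) -> int:
        """What the 6502 would see at this offset in the 1K ROM window."""
        return self.cache[offset]


# Hypothetical test image: every byte holds the number of the bank it lives in.
image = bytes(i // BANK_BYTES for i in range(NUM_BANKS * BANK_BYTES))
rom = BankCache(image)
rom.select_bank(3)
print(rom.read(0), rom.pages_copied)  # 3 64
```

Each bank switch costs 64 page reads in this model, so the delay in question is whatever one UFM page read takes, times 64.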

Stupid Idea #3

From what I’ve observed of the ROM code, bank 1 contains 5.25 inch functions plus 3.5 inch formatting. Bank 3 is exclusively for 3.5 inch stuff, and bank 7 is exclusively for Smartport drives. Maybe I could temporarily remove some parts of the ROM, in order to make it all fit? Then I might be able to test all the different types of supported disk drives, just not all at the same time.

Stupid Idea #4

Maybe I can modify the ROM code to use 2K of the Apple II’s own RAM instead of 2K of onboard RAM? Then everything would fit in the FPGA. But there must be a good reason the UDC designers didn’t do this. What 2K region of Apple II RAM is safe to use, and wouldn’t get overwritten by running software? I’m not sure.

Stupid Idea #5

Maybe I can modify the prototype board somehow, and graft an extra RAM or ROM chip on there for testing purposes? Maybe I can add a second peripheral card and somehow use its RAM or ROM? Now these ideas are getting crazy.

What’s the Long-Term Solution?

None of these ideas except #2 is workable as a long-term solution if I eventually move ahead with manufacturing this disk controller card. So what path makes the most sense in the long term?

Stepping up to the MachXO2-2000 would add about $2 in parts cost, which maybe doesn’t sound like much, but it’s significant. The XO2-2000 has 9.25K of EBR and 2K of distributed RAM, so the design should fit with a small amount of room to spare. That’s surely the least-effort solution.

I could keep the MachXO2-1200 and add a separate 2K RAM chip. The 8K of ROM would fit in the 1200’s EBR. The combined cost might be slightly lower than the MachXO2-2000, but the design and layout would become more complex, and I’m not sure it’s worth it.

I could step down to the MachXO2-640 (2.25K EBR, 640 bytes distributed RAM) and add a separate ROM chip. Total cost would be slightly less than a MachXO2-1200, and I’d also gain lots of extra ROM space for implementing extra features or modes. That would be great. Like adding a separate RAM chip, the extra ROM would make the board design and layout somewhat more complex. But the biggest drawback would be for manufacturing or reprogramming, because both the FPGA and the ROM would need to be programmed separately before the card could be used. Or maybe the FPGA could program the ROM somehow, but it would still be cumbersome and far less attractive than a single-chip programming process.
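For a side-by-side view of these options, here's a small Python sketch that totals on-chip memory against whatever still has to live on the FPGA. It uses only the sizes quoted in this post. The `Option` class and its field names are invented for illustration, and the "spare" figure is a raw byte count: it counts distributed RAM as if it were free, when in practice it eats logic resources, so treat it as an upper bound.

```python
from dataclasses import dataclass

KIB = 1024
ROM_BYTES = 8 * KIB   # UDC ROM
RAM_BYTES = 2 * KIB   # UDC RAM


@dataclass(frozen=True)
class Option:
    name: str
    ebr_bytes: int
    distributed_bytes: int
    external_rom: bool = False
    external_ram: bool = False

    def on_chip_need(self) -> int:
        """Bytes of ROM/RAM that must still be stored inside the FPGA."""
        need = 0
        if not self.external_rom:
            need += ROM_BYTES
        if not self.external_ram:
            need += RAM_BYTES
        return need

    def spare_bytes(self) -> int:
        """On-chip memory left over; negative means the design doesn't fit."""
        return self.ebr_bytes + self.distributed_bytes - self.on_chip_need()


OPTIONS = [
    Option("MachXO2-1200 alone", 8 * KIB, 1280),            # 8K EBR, 1.25K distributed
    Option("MachXO2-2000 alone", 9472, 2 * KIB),            # 9.25K EBR, 2K distributed
    Option("MachXO2-1200 + external RAM", 8 * KIB, 1280, external_ram=True),
    Option("MachXO2-640 + external ROM", 2304, 640, external_rom=True),  # 2.25K EBR
]

for opt in OPTIONS:
    print(f"{opt.name}: {opt.spare_bytes():+d} bytes")
```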

I never imagined a shortage of just 768 bytes could make such a difference. What an adventure!

14 Comments so far

And I just created a VARCHAR(1024) field for a usually short text below 200 Chars. Now I feel guilty 🙁

It’s easy for some dude you don’t know to give advice on the internet, but as engineers, we sometimes get hung up on things.

Although adding extra components feels like cheating, every time I did this in the past, at some future point I was glad I did – margin, extra features etc.

Since these are not commodity parts, I’m pretty sure your target customer can tolerate a $1 COGS, $3 price increase…

@Vincent I think you are probably right.

How slow is the UFM? Is it within the realm of using RDY signalling to delay on a cache miss, and force-caching only the timing-critical sections in EBR?

It will take some work to determine a solid number for effective UFM access speed. It looks like there’s some substantial latency before you can get the first byte out, but after that I think it’s something like 1 byte per clock, with a max clock speed of 16 MHz? The docs aren’t totally clear. At any rate I don’t think literal on-demand caching using RDY would be necessary, because the switching between ROM banks is already handled programmatically by an existing bank-switch routine. Stupid Idea #2 is essentially to patch that. I like your idea of keeping some ROM banks permanently in EBR if they contain timing-critical stuff, since only a couple of banks need to be cacheable to free up enough space.

As for the UFM access speed, there’s some info on page 74 here: Using User Flash Memory and Hardened Control Functions in MachXO2 Devices Reference Guide. As best I can tell, it takes 3 clock cycles to do one Wishbone transaction. It takes five transactions to set up a read, then you can read one byte per additional transaction. Then there’s a final closing transaction. So total time to read a 16-byte UFM page is 3 clocks * (5 setup + 16 bytes + 1 closing) = 66 clocks. The max clock speed isn’t specified there, but elsewhere I saw a number of 16.6 MHz. That puts the time to read one UFM page (16 bytes) at about 4 microseconds and the time to read 1K bytes at about 254 microseconds. I don’t have a 16.6 MHz clock source though. The rest of the design is clocked at 2 MHz, which would multiply all the UFM times by about eight. Or I could try to use the internal clock generator to get 16.6 MHz and implement some clock domain-crossing stuff.
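That estimate is easy to replay in a few lines of Python. The transaction counts and the 16.6 MHz figure are the ones from this comment, not confirmed datasheet values, and the function names are made up for illustration.

```python
CLOCKS_PER_TRANSACTION = 3   # Wishbone clocks per transaction, as estimated above
SETUP_TRANSACTIONS = 5       # transactions needed to set up a read
CLOSING_TRANSACTIONS = 1     # final closing transaction
PAGE_BYTES = 16              # one UFM page


def clocks_per_page() -> int:
    """Clock cycles to read one 16-byte UFM page."""
    transactions = SETUP_TRANSACTIONS + PAGE_BYTES + CLOSING_TRANSACTIONS
    return CLOCKS_PER_TRANSACTION * transactions


def page_time_us(clock_mhz: float) -> float:
    """Microseconds to read one UFM page at the given clock."""
    return clocks_per_page() / clock_mhz


def block_time_us(clock_mhz: float, block_bytes: int = 1024) -> float:
    """Microseconds to read a block page by page (1K by default)."""
    pages = block_bytes // PAGE_BYTES
    return pages * page_time_us(clock_mhz)


for mhz in (16.6, 2.0):
    print(f"{mhz} MHz: {page_time_us(mhz):.2f} us/page, "
          f"{block_time_us(mhz):.0f} us per 1K")
```

Even at 16.6 MHz, one page read comes out near 4 microseconds, well over the ~500 ns budget for feeding a byte directly to the CPU. At 2 MHz a full 1K copy lands around 2.1 milliseconds.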

The iCE40 UP3K/UP5K FPGAs have 128 kB of on-chip SPRAM (single-port RAM), which would be more than enough. However, the selection of packages is very restricted: the largest is a 48-pin QFN, which leaves you with only 39 I/O pins.

@Michael the ICE40 Ultra and UltraPlus FPGAs look very attractive for this: lots of memory and low price. Unfortunately I don’t think the design can squeeze into 39 I/Os. I’m estimating the I/O count will be somewhere in the low 40s. So close!

Could external SPI flash be fast enough to provide the ROM functionality?

If so, and the FPGA could also boot from the same flash without prior configuration, that might help with the manufacturing/reprogramming problem.

Hmm, interesting thought on SPI flash doubling as both ROM storage and FPGA configuration. I did look at SPI for ROM storage very briefly a few weeks ago, and concluded it didn’t look promising, but I don’t remember the details offhand. Like the FPGA UFM, I think it has a lot of communication overhead, and may also require reading a full page at a time. If it could be clocked fast enough, it might still work.

After some reflection, I will probably keep things simple and just use an FPGA with more memory, or else add an external parallel ROM. The other approaches seem a bit “too clever”.

This reminds me of a port of your PlusToo code to Pepino LX9 with 1 MB of SRAM. I put the 128 KB of ROM code after the bitfile in flash and executed the code directly from the flash. Details at https://saanlima.com/forum/viewtopic.php?f=18&t=1446 and https://www.saanlima.com/pepino/index.php?title=Pepino_PlusToo.

How well does the ROM code compress? Perhaps a variant of idea #2 would be to store the ROM data using some kind of simple (RLE?) compression and decompress bank on bank switch?

It would probably compress well, but with a substitution for common byte values, rather than with RLE. It’s 6502 machine code, so most of the opcode bytes are one of a dozen-or-so common opcodes, and the operands have some very common values too (like zero).
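One way to get a rough feel for this is to estimate the size under a very simple substitution scheme. The Python sketch below assumes a hypothetical encoding that isn't from the UDC or any real tool: the 15 most common byte values get 4-bit codes, every other byte costs a 4-bit escape plus 8 literal bits, and a 15-byte lookup table is stored alongside. It only estimates size and doesn't actually encode anything.

```python
from collections import Counter

SHORT_CODES = 15  # 4-bit codes 0..14 map to common bytes; code 15 is the escape


def estimate_compressed_bytes(data: bytes) -> int:
    """Estimated size under the hypothetical 4-bit substitution scheme."""
    counts = Counter(data)
    common = counts.most_common(SHORT_CODES)
    short_hits = sum(n for _, n in common)   # bytes that get a 4-bit code
    escaped = len(data) - short_hits         # bytes stored as escape + literal
    bits = short_hits * 4 + escaped * 12
    table_bytes = SHORT_CODES                # lookup table of the common values
    return table_bytes + (bits + 7) // 8


# Made-up sample: mostly zeros plus 20 distinct rare values (not real ROM data).
sample = bytes([0] * 100 + list(range(1, 21)))
print(len(sample), estimate_compressed_bytes(sample))  # 120 81
```

Running it on each real 1K bank would show whether the savings are large enough to matter. The decompressor would also need to be cheap enough to run on every bank switch.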

I’d have to concur w/ Vincent. Since these are custom products (with target customers who are a bit odd to begin with), selecting parts that might cost a few $ more, but would significantly cut down on your implementation time (which has value in and of itself), would allow you to finish the product with a slightly higher parts cost, that you would pass along to us consumers, who would pay it, as ‘rolling our own’ would be INFINITELY more expensive.