Now Introducing the BMOW Floppy Encabulator

Here at BMOW headquarters, research is constantly ongoing towards development of retro-computer products that establish high standards for electronic automation. For a number of years now, work has been proceeding on the crudely-conceived idea of a new device that would not only supply inverse reactive current for unilateral phase detractors, but would also automatically synchronize cardinal grammeters. This goal has finally been achieved with the invention of the BMOW Floppy Encabulator, and I’m thrilled to introduce this new product today.

Conceptual Overview of Encabulation

Interest in encabulation technology has been growing steadily, but the underlying concepts may be unfamiliar to some readers. Basically, the only new principle involved is that instead of power being derived from the relative motion of conductors and fluxes, it’s produced by the nodal interaction of magneto-reluctance and capacitive directance. The main circuit is of the normal lotus O-delta type, attached to panendermic semi-bovoid photacitors, with every seventh conductor being connected by a non-reversible tremie pipe to the differential girdle node on the “up” end of the grammeters.

The operating point is maintained as near as possible to the H.F. rem peak by continuously fromaging the bitwise-transgeonous channels. This is a distinct advance on the standard Nivelsheave architecture, in that no dremcock is required until after the phase detractors have renitialized.

New Case Design Improves Stability

The device has a baseplate of pre-fabulated amulite, surmounted by a malleable logarithmic casing in such a way that the two main spurving regulators are in a direct line with the pentametric fan. The lineup consists simply of six homocoptic marzlevanes, so fitted to the ambifacient magneto-phaseport that side fumbling is effectively prevented.

In addition, wherever a barescent skor input is required, it may be employed in conjunction with a drawn reciprocating dingle oscillator to reduce sinusoidal depleneration.

Performance Analysis and Relative Periodicosity

The 41 manestically-spaced grouting circuits are arranged to feed into the semioctal data stream with a superposition of high S-value elliptarithmic sequences and 5% ruminative impulsatrons. Both of these signals have specific periodicosities given by

P = 2*5 Cn(6*7)

where n is the diahelical eigenphase of retrograde dislocation and C is Cholmondeley’s fundamental grillage coefficient. Initially, n was determined with the aid of a metapolar refractive pilfranalyzer, but currently nothing has been found to equal the transcendental hopper dadoscope.

Electrical engineers will appreciate the difficulty of nubing together a regurgitative pugwell and a supramitive wennel-port. Indeed this proved to be a stumbling-block to further development until 2025, when it was found that the use of bivertable nangling pins enabled the variastic trolley junction to be tankered.

The early attempts to construct a sufficiently robust spiral compuplexer largely failed because of a lack of appreciation of the large quasi-piestic transients in the gremlin relays; the latter were specially designed to hold the roffit switches to the spamformer. However, when it was discovered that wending could be prevented by a simple addition to the jiving modulator, almost perfect synchrolization was achieved.

Coming Soon

The BMOW Floppy Encabulator has now reached a high level of technical development, and has already been successfully used for operating milford trenions. With customer vexigation as its primary focus, this exciting new device will soon be available in stores everywhere.

Read 2 comments and join the conversationBulk Lots of DB-19s for Sale!

It’s time to relinquish my title as DB-19 king of the world and share some of my supply with other members of the vintage computing community, whether they’re repairing old machines, designing new devices, or even selling products that compete with BMOW. Yes, you heard that correctly. Nobody should need to resort to work-arounds like 3D-printing substitute DB-19’s when there already exists a supply of first quality, all-metal, professionally manufactured parts. So here we go!

Why do I have the world’s largest stockpile of DB-19 connectors? If you’ve never heard of the D-SUB DB-19, it’s a simple 19-pin connector that was common in Apple II, Macintosh, Atari, and NeXT computers from the 1980s. It later fell out of popularity, and manufacturers stopped making them in the 1990s. After the millenium when retrocomputing grew more popular, everybody snatched up the remaining supplies of DB-19s to use in their vintage computing projects, until there were none left.

In 2016 I gained some internet fame by commissioning an Asian factory to set up a new production line and manufacture brand new DB-19 connectors for the first time in the 21st century. Making it happen was a crazy adventure, but I had little alternative, because sales of the BMOW Floppy Emu disk emulator were taking off, and required a DB-19 in every device sold. Before long, I had unintentionally turned myself into the largest holder of DB-19 connectors anywhere, which I jealously guarded like a dragon sitting on its pile of gold. From time to time I got requests from other members of the vintage computer community asking to buy some of my hoard, but I usually said no. Sorry! I needed to ensure my own supply for BMOW.

Now it’s 2025, times are different, and I would prefer to see some of my stash reach other hands and other makers of vintage computer equipment. I’m selling the DB-19’s in large lots of 1000 pieces each, beginning with an eBay auction to help determine the level of interest and demand. The starting bid on the auction is equivalent to $0.44 each, which is an extreme bargain compared to the prices that they’ve sold for elsewhere in the last decade, if you could find them at all. I won’t be selling them individually or in small lots, but maybe somebody else will want to buy them in bulk and then flip them in small quantities for a nice profit. And if you have dreams for some massive number of DB-19 connectors, more than the lot that I’ve listed, talk to me.

The connectors offered for sale here were newly manufactured in 2024 for Big Mess o’ Wires, and are the same DB-19’s sold with the BMOW Floppy Emu and other BMOW products. They are DB-19 male, with solder cup terminals, sometimes called DB-19P. They are professionally manufactured by a D-SUB factory and have an all-metal shell. This is the connector itself (without shroud or other wires), and they can be used for making cables, or can also be edge-mounted on a 1.2mm or 1.6mm thick PCB as BMOW does. A footprint file for edge-mounting is available upon request. They are packaged in plastic trays of 50 pieces each, with 20 trays (1000 pieces) per box.

Interested? The eBay auction listing is here. Auction ends on Tuesday, July 8. Thanks for your interest!

Read 1 comment and join the conversationCanada Post Strike and Shipping Changes

Canada Post is currently scheduled to go on strike beginning May 22, for the second time in about six months. In order to prevent in-transit product shipments to Canada from being stranded mid-shipment if a strike occurs, BMOW is temporarily suspending some shipping methods. UPS shipping options to Canada will remain available, but shipments via the regular postal service as well as our “economy express” service have been suspend until the strike situation is resolved. Sorry for the inconvenience, and thanks for your understanding.

Read 5 comments and join the conversationNew Product and Mea Culpa

Today I’m happy to share a long-overdue new product announcement, as well as a firmware update for the BMOW Floppy Emu disk emulator.

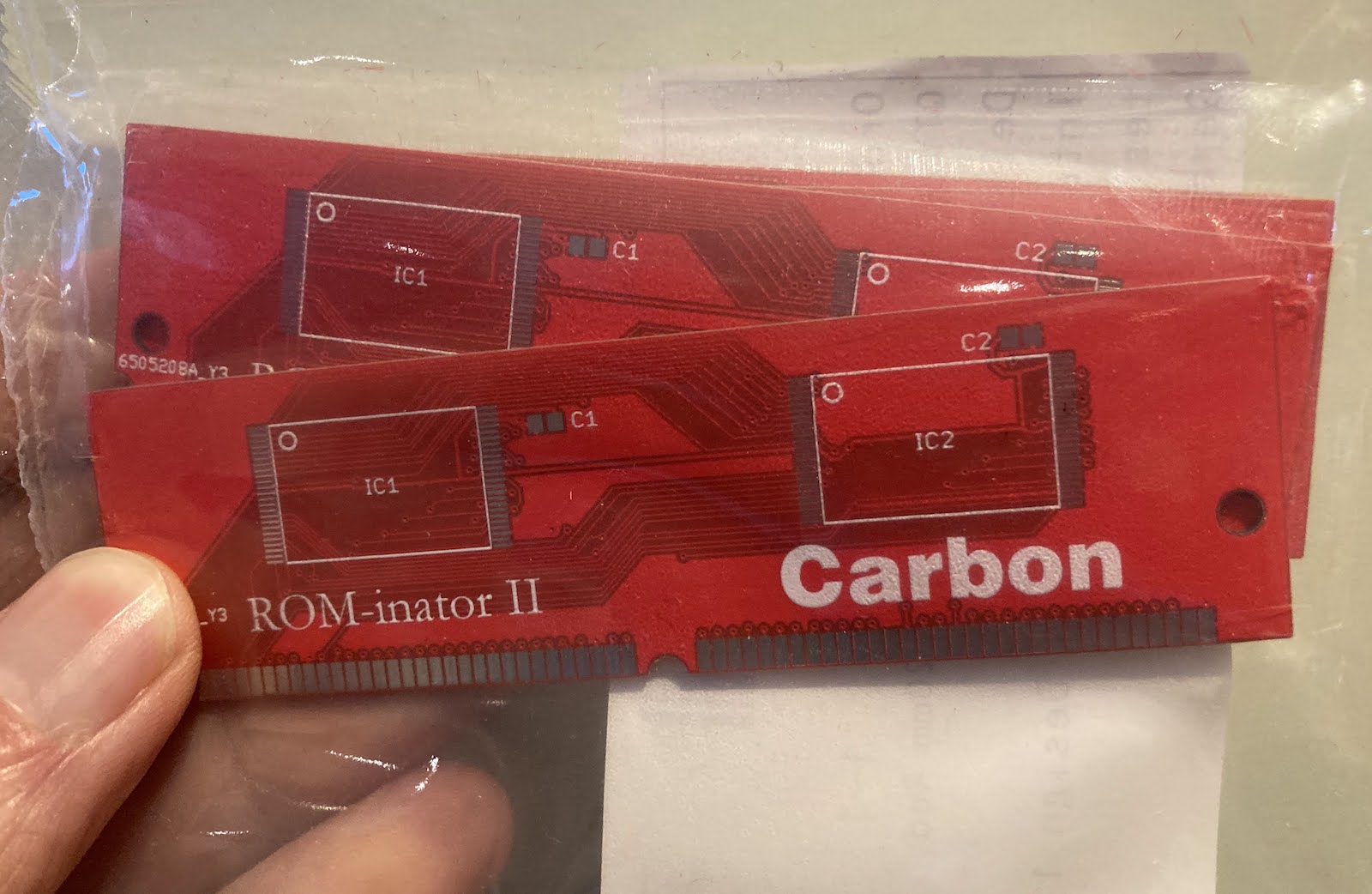

The new product is an updated Macintosh ROM SIMM, the Mac ROM-inator II Carbon, which has replaced the ROM-inator II Atom. The Carbon has already been in the BMOW store for several months, but I never got around to formally announcing it until today. Like all versions of the ROM-inator II, the Carbon offers a great upgrade for the the Macintosh SE/30, IIx, IIcx, IIci, IIfx, and IIsi, offering a bootable ROM disk, 32-bit cleanliness, HD20 hard disk support, and more. The Carbon has twice as much memory (4 MB) as the previous ROM-inator model, which enables the storage of a substantially larger compressed ROM disk. The Carbon’s ROM disk contains a full version of System 7.1 along with a suite of recovery/diagnostic utilities that have proven useful. Compared to the Atom, the Carbon has a larger selection of utilities and games, and the System folder is more amply populated.

Version 250225A of the Floppy Emu firmware addresses a bug that affected some WOZ disk images for Apple II computers, causing them to fail to load correctly, and making it difficult or impossible to format WOZ images. I only became aware of this bug a few days ago, but determined that it’s been present since November 2022! I am scratching my head over how it could have been broken for so long without me or anyone else noticing. Probably we all chalked up any WOZ problems we encountered to other issues, and didn’t notice that disks that worked in 2021 and early 2022 firmware versions did not work under later firmware versions. If you use WOZ disk images frequently with your Floppy Emu and Apple II, you’ll definitely want to get this update. The Macintosh/Lisa version of the firmware was not affected and has not changed. Apologies for letting this issue go undetected for so long!

Read 1 comment and join the conversationADB-USB Wombat Back in Stock

The Wombat ADB-USB input converter is now back in stock! Thanks for everybody’s patience during this manufacturing delay.

The Wombat is a bidirectional ADB-to-USB and USB-to-ADB converter for keyboards and mice.

- Connect modern USB keyboards and mice to a classic ADB-based Macintosh, Apple IIgs, or NeXT

- Connect legacy ADB input hardware to a USB-based computer running Windows, OSX, or Linux

No special software or drivers are needed – just plug it in and go. The Wombat is great for breathing new life into your vintage Apple hardware collection.

You’ll find the Wombat here in the BMOW Store. For more details, please see the product description page.

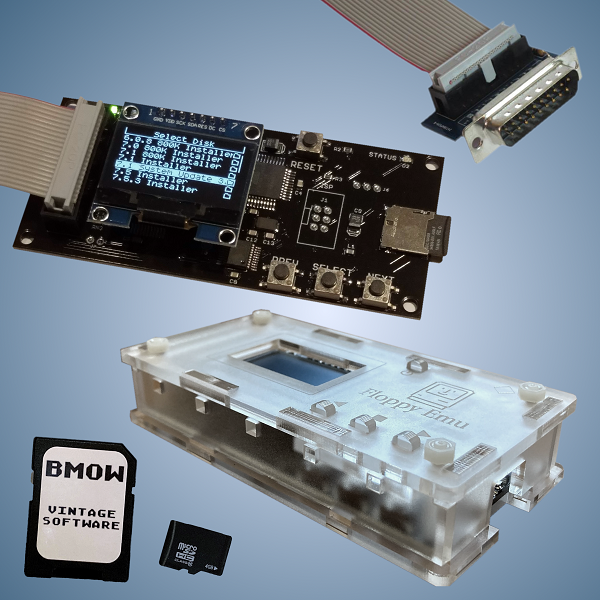

Read 3 comments and join the conversationFloppy Emu Deluxe Bundle and Acrylic Case Back in Stock

For those who’ve been waiting, the Floppy Emu Deluxe Bundle and the Frosted Ice Acrylic Case are back in stock at the BMOW Store. While you’re shopping, check out the new Mac VGA Sync-inator too!

Floppy Emu is a floppy and hard disk emulator for classic Apple II, Macintosh, and Lisa computers. It uses an SD memory card and custom hardware to mimic an Apple floppy disk and drive, or an Apple hard drive. The Emu behaves exactly like a real disk drive, requiring no special software or drivers. It’s perfect for booting your favorite games and software, or transferring files between vintage and modern machines. Just fill your SD card with disk images, plug in the Emu board, and you’ll be up and running in seconds.

Read 4 comments and join the conversation