Archive for January, 2018

Yellowstone: Cloning the Apple II Liron

FPGA-based disk control for Apple II is finally working! Six months ago, I began designing a universal disk controller card for the Apple II family. Apple made a bewildering number of different disk controller cards in the 1970s and 80s, and my hope was to replace the IWM chip (Integrated Wozniak Machine) and other assorted ICs typically found on the cards, and substitute a modern FPGA. With a little luck, that would make it possible to clone any vintage disk controller card – some of which are now rare and expensive. It would also enable a single card to function as many different disk controllers, simply by modifying the FPGA configuration. With the successful cloning of a Liron disk controller, the first major step towards those goals has been made.

Six months passed from the initial design until now, but it wasn’t exactly six months of continuous work. After a short spurt of activity last summer, the project sat collecting dust on my desk until I recently picked it up again. Sometimes it’s hard to find motivation!

Hello Liron

The “Liron” disk controller was introduced by Apple in 1985. More formally known as the Apple II UniDisk 3.5 Controller, it’s designed to work with a new generation of “smart” disk drives more sophisticated than the venerable Disk II 5.25 inch floppy drive. The smart disk port on the Liron is appropriately named the Smartport, and it can communicate with block-based storage devices such as the Unidisk 3.5 (an early 800K drive) and Smartport-based Apple II hard drives.

Why care about the Liron? The Apple IIc and Apple IIgs have integrated disk ports with built-in Smartport functionality, but for the earlier Apple II+ and IIe, the Liron is the only way to get a Smartport. For owners of the BMOW Floppy Emu disk emulator, the Liron card makes it possible to use the Floppy Emu as an external hard drive for the II+ and IIe. Unfortunately finding a Liron is difficult, and although they occasionally turn up on eBay, they’re quite expensive. That made cloning the Liron a logical first goal.

John Holmes was kind enough to lend me his Liron card for examination. Later Roger Shimada made the generous gift of a Liron and a Unidisk 3.5. I’m indebted to both of these kind gentlemen for their help.

The Liron contains an IWM chip, a 4K ROM, and a handful of 7400-series glue logic chips. It took a few hours to trace all the connections on the card and create a schematic. Except for the IWM, the exact functions of the other chips are all well known and relatively easy to implement in a hardware description language for the FPGA. Fortunately there’s a spec sheet for the IWM too, written by Woz himself, available if you search through dusty corners of the Internet. Based on that information, I was able to create an HDL model of the IWM for synthesis in the FPGA. It was a fairly big project, but I’d already done part of it back in 2011 for my Plus Too Mac replica.

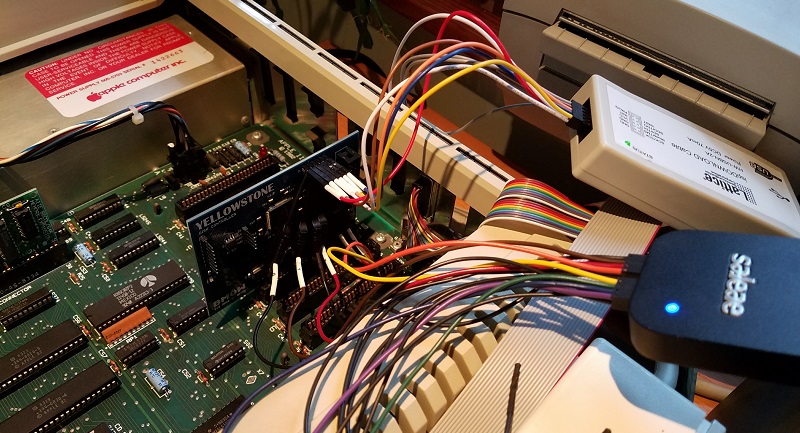

Yellowstone Prototype

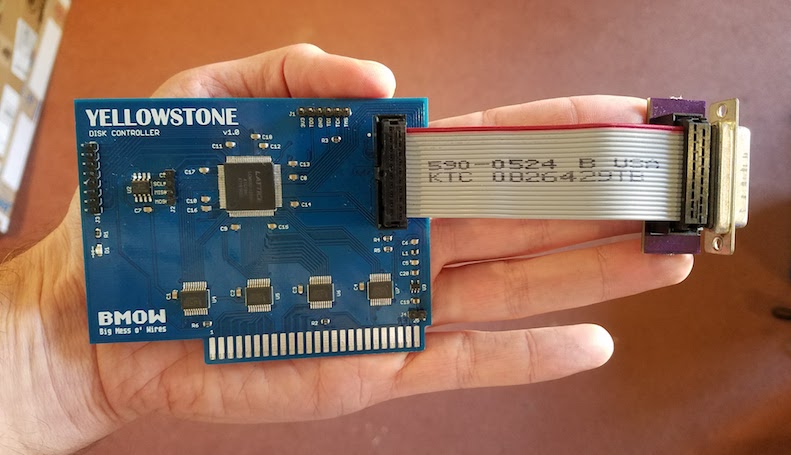

The first Yellowstone prototype was sketched out during a single hectic week. I’d never made an Apple II card before, but it’s just a standard thickness PCB with a specific shape and pattern of edge connectors. The core of the Yellowstone board is a Lattice MachXO2 FPGA, specifically the LCMXO2-1200HC. This 100-pin chip has 1280 LUTs for implementing logic, and 8 KB of embedded block RAM to serve as the boot ROM or for other functions. It also has some nice features like a built-in PLL oscillator and integrated programmable pull-up and pull-down resistors. Unlike some FPGAs, the MachXO2 family has built-in flash memory to store the FPGA configuration, so it doesn’t need to be reloaded from an external source at power-up. The FPGA can be programmed through a JTAG header on the card.

Because the FPGA’s maximum supported I/O voltage is 3.3V, but the Apple II has a 5V bus, some level conversion is needed. I used four 74LVC245 chips as bus drivers. These chips operate at 3.3V but are fully 5V tolerant, and the Apple II happily accepts their 3.3V output as a valid logic “high”. One of the chips operates bidirectionally on the data bus, and the others handle the unidirectional address bus and control signals.

The prototype card also has a 2 MB serial EEPROM. I’m not exactly sure how this will be used, but I’m hoping to find a way to load disk images from the EEPROM as well as load disks from a real drive. 2 MB is enough to store 14 disk images of 5.25 inch disks, or a single larger disk image. It’s not central to the design, but if it works it would be exciting.
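The figure of 14 images can be checked with a few lines of arithmetic. This is only a sketch: it assumes that "2 MB" means 2 × 1024 × 1024 bytes and that a 5.25 inch image is the usual 35 tracks × 16 sectors × 256 bytes (140 KB). The function and macro names are made up for illustration.

```c
#include <stdint.h>

/* Assumed size of a standard 5.25 inch Apple II disk image:
   35 tracks x 16 sectors x 256 bytes = 143,360 bytes (140 KB). */
#define DISK_525_IMAGE_BYTES (35u * 16u * 256u)

/* Assumed EEPROM capacity: 2 MB taken as 2 * 1024 * 1024 bytes. */
#define EEPROM_BYTES (2u * 1024u * 1024u)

/* Number of whole images of image_bytes that fit in capacity_bytes. */
uint32_t images_that_fit(uint32_t capacity_bytes, uint32_t image_bytes)
{
    return capacity_bytes / image_bytes;
}
```

With those assumptions, `images_that_fit(EEPROM_BYTES, DISK_525_IMAGE_BYTES)` comes out to 14, with a bit more than half an image's worth of space left over.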

To make the physical connection to an external disk drive, I attached a short cable to another custom PCB with a DB19-F connector. The female version of the DB19 isn’t quite as difficult to find as the male, but it’s not exactly common. If Yellowstone eventually becomes a product and sells in any appreciable volume, obtaining sufficient supplies of the DB19-F will likely be a problem.

Putting it All Together

After months of procrastination, and a long digression into what proved to be a faulty JTAG programmer, I was finally ready to put Yellowstone to the test. After a few quick fixes, it worked right away! I was very surprised, considering that the complex IWM model for the FPGA was developed without any iterative testing or validation. I got lucky this time.

Here’s Yellowstone, booting an Apple IIe from a 6 MB hard disk image using a Floppy Emu Model B in Smartport mode:

And here’s Yellowstone again, booting an 800K ProDOS master disk from an Apple Unidisk 3.5 drive:

What’s Next?

I’ve made it this far – phew! Next, there are lots of little things to fix on the card. Some parts are labeled incorrectly, it’s slightly too wide, some extra resistors and buffers are probably needed for safety, etc. Addressing all those items will keep me busy for a while.

Second, I’d like to investigate cloning other types of Apple II disk controllers. The Disk II controller card should be fairly easy to clone – or the Disk 5.25 controller, which is essentially the same card with a different physical connector. I’m about 90% sure I can make that work. I would love to clone the Apple II 3.5 Disk Controller too (aka the Superdrive controller), but that would be a much larger effort and I’m not certain it’s possible. I believe the real Superdrive controller contains an independent 6502 CPU and is quite complex.

In theory the Yellowstone card could also implement other non-disk functions, although it might require a different physical connector to make use of them. A serial card maybe? Some kind of networking? A coprocessor?

The elephant in the room is the question of Yellowstone’s ultimate goal. Is it a hobby project, or a product? If a product, how much demand really exists for something like this? How would the demand change, depending on what kinds of other disk controllers I’m ultimately able to clone? And how would Yellowstone buyers update the FPGA with new firmware for new clones and bug fixes? I probably can’t assume that every customer owns a JTAG programmer and has the tools and skills to use it. But I’m reluctant to add a USB interface or microcontroller that’s used solely for JTAG/firmware updates and is dead weight otherwise. I’m still waiting for a great solution to hit me.

I’m happy the Apple II bus interface is so easy to understand and implement. Thanks to Woz for that. With just a ROM and a bit of glue logic (or their equivalents in FPGA), you can do all sorts of creative things. With today’s computers being such closed systems, I’m glad we still have antiques like the Apple II to provide an outlet for my electronics tinkering.

No Device Connected

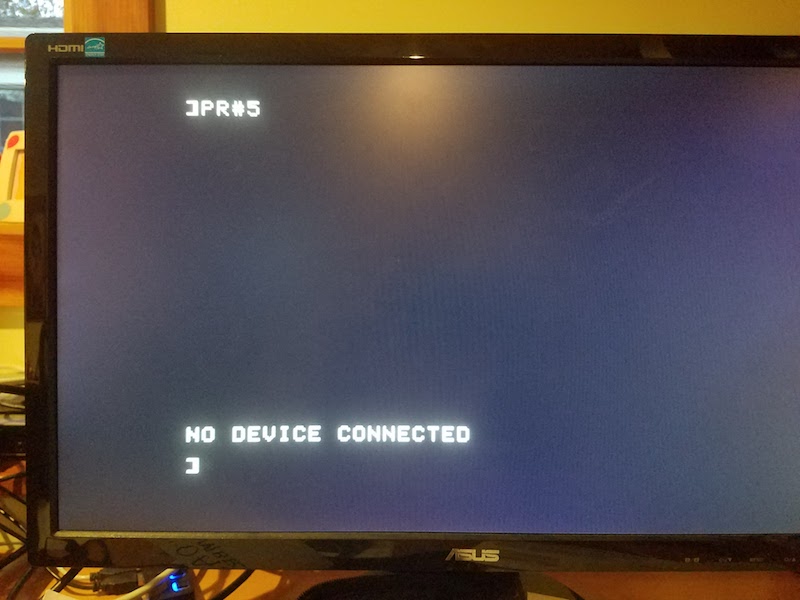

Finally, the first real progress on the Yellowstone disk controller since last summer! Sometimes an error is a good thing. It doesn’t look like much yet, but this error demonstrates that the Yellowstone card is correctly decoding address references to its slot, and is serving up data from a simulated ROM inside the FPGA. In this case the ROM data is the firmware from a stock Liron card. The Apple II’s 6502 CPU runs the firmware, which tries to use the FPGA’s soft-IWM to find a Smartport-capable disk drive. It finds none, and prints an error message. Hot stuff.

An error message is much better than having PR#5 crash the computer. That’s what happened on the many previous iterations of this test.
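For readers unfamiliar with Apple II slots, here's a rough C model of which addresses belong to a card in a given slot. It follows the standard Apple II memory map (slot ROM at $Cn00, sixteen device I/O bytes at $C080 + slot × $10, shared expansion ROM at $C800-$CFFF). It is not Yellowstone's actual HDL, and the names `card_region` and `decode_address` are hypothetical. On real hardware the motherboard also provides per-slot select signals, which this sketch ignores.

```c
#include <stdint.h>

/* Regions of the Apple II address space that matter to a peripheral card. */
typedef enum {
    REGION_NONE,          /* not addressed to this card */
    REGION_DEVICE_IO,     /* 16 bytes at $C080 + slot * $10 */
    REGION_SLOT_ROM,      /* 256 bytes at $Cn00, where n is the slot number */
    REGION_EXPANSION_ROM  /* shared 2 KB window at $C800-$CFFF */
} card_region;

/* Classify a CPU address from the point of view of a card in `slot` (1-7).
   Tracking which card currently owns the shared expansion ROM window
   is deliberately left out of this sketch. */
card_region decode_address(uint16_t addr, unsigned slot)
{
    if (slot < 1 || slot > 7) {
        return REGION_NONE;
    }

    unsigned io_base  = 0xC080u + slot * 0x10u;
    unsigned rom_base = 0xC000u + slot * 0x100u;

    if (addr >= io_base && addr <= io_base + 0x0Fu) {
        return REGION_DEVICE_IO;
    }
    if (addr >= rom_base && addr <= rom_base + 0xFFu) {
        return REGION_SLOT_ROM;
    }
    if (addr >= 0xC800u && addr <= 0xCFFFu) {
        return REGION_EXPANSION_ROM;
    }
    return REGION_NONE;
}
```

PR#5 sends the CPU to $C500, so for a card in slot 5 `decode_address(0xC500, 5)` returns `REGION_SLOT_ROM`, and that's where the first byte of firmware has to show up.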

The next step is to stick a logic analyzer on the card’s disk interface, and see if the I/O lines are moving as expected. If that works, I’ll connect a disk drive and cross my fingers.

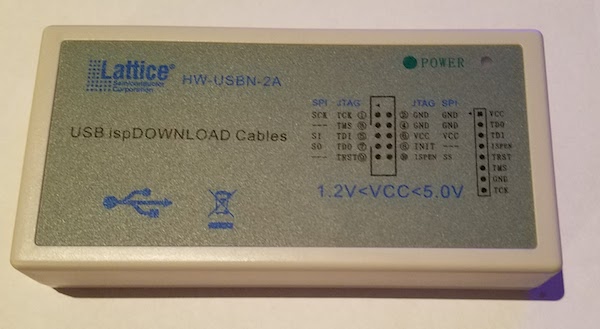

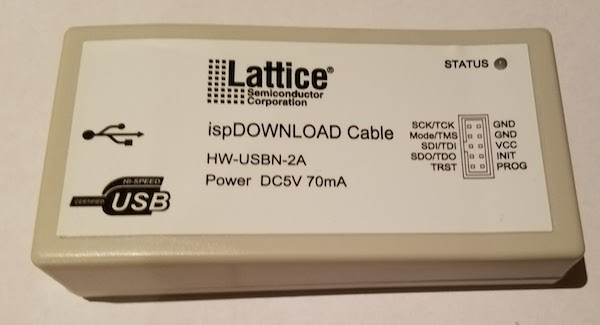

I also received another Lattice clone JTAG programmer in the mail today. This one worked on the first try, and the power/status LED works too! After a week of fiddling with my first Lattice clone, and reworking its PCB to replace the 74LS244 with a 74HC244, I finally got that one working as well. Fixing the first JTAG programmer was an interesting digression, but not really how I’d hoped to spend my time. If you’re shopping for a Lattice clone JTAG programmer, choose the one that looks like this:

not this:

Time for a celebratory beer.

Quest For a Simple JTAG SVF Player

Where are all the cheap and simple third-party JTAG programmers? My work on the Yellowstone disk controller was sidetracked by issues with the Lattice-clone JTAG programmer, and discussion about ways that future Yellowstone customers might do JTAG programming. Eventually I realized that any generic JTAG hardware can program Yellowstone’s Lattice FPGA using a standard SVF file generated by the Lattice software. But what JTAG programming hardware to use, and what software to control it?

The device I’m imagining needs to play SVF files on a JTAG interface and verify the result. That’s all. It doesn’t need to handle JTAG debugging, ISP programming, SPI, or anything else. It should have easy cross-platform software to control the device: a basic GUI where the user can select an SVF file and click a button, then see progress info and a final good/bad result. Since JTAG is a fairly simple serial interface, this shouldn’t be much more difficult than building something like a USB-to-serial adapter, along with software to control it, which could leverage something like lib(X)SVF for SVF parsing. I confidently assumed there would be many such devices available for sale.

I was wrong. My search found nothing that really fit the requirement of cheap and easy, though a few options came close. Here’s a summary of what I found. If I’ve overlooked something obvious, please leave me a note in the comments.

Bus Blaster – A $35 open source JTAG tool based on the FT2232H. The hardware fits the bill, but the software does not. The Bus Blaster seems mainly intended to be used with UrJTAG or OpenOCD software, which are both unfortunately the opposite of user-friendly. From what I can tell, neither one has an official binary distribution, so both must be compiled from source code. From there it’s a somewhat daunting sequence of command line work to get things done. Surely there’s a simpler option if all that’s needed is an SVF player?

Flash Cat – A $30 commercial JTAG device that also does SPI, I2C, and serial. It’s not clear what IC it uses. This might almost work, and the software looks promising, but it’s Windows-only.

TinyFPGA Programmer – A $9 open source programmer for the TinyFPGA product line, and based on a PIC microcontroller. This is the best solution I’ve found so far, and the software is simple and cross-platform. But the hardware is specifically designed for the TinyFPGA boards, and so it makes assumptions about voltage levels and JTAG pinouts, and may have other limitations that prevent it from being a general-purpose programmer. The software is a Python module and the GUI is Tk. Beggars can’t be choosers, especially for only $9, but a native control program for Mac and Windows would be preferred.

Those were the only decent commercial options I could find. Everything else was either a platform-specific tool, or a more complex and expensive JTAG debugger like the Segger J-Link, or somebody’s one-off hobby project.

Am I blind, and overlooking an obvious solution? If not, why doesn’t something like this already exist? Is it more complicated than I imagine? Or is there simply no demand for it? It sure looks like you could build a device like this using any simple microcontroller or USB-to-serial converter. Then spend a little time creating native apps for Mac and Windows, and get everything lined up regarding necessary drivers and software signatures, and you’d have a very desirable product. I have half a mind to drop everything and go do this myself.
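To give a sense of why the JTAG side looks so tractable: underneath every SVF command such as SIR, SDR, or STATE is the 16-state TAP controller from IEEE 1149.1, which advances one state per TCK clock depending on TMS. Here's a minimal C sketch of that state machine. The names `tap_clock` and `tap_walk` are hypothetical, and the sketch only models state transitions; it doesn't touch any hardware or parse SVF.

```c
/* The 16 TAP controller states defined by IEEE 1149.1. */
typedef enum {
    TEST_LOGIC_RESET, RUN_TEST_IDLE,
    SELECT_DR, CAPTURE_DR, SHIFT_DR, EXIT1_DR, PAUSE_DR, EXIT2_DR, UPDATE_DR,
    SELECT_IR, CAPTURE_IR, SHIFT_IR, EXIT1_IR, PAUSE_IR, EXIT2_IR, UPDATE_IR
} tap_state;

/* next_state[s][tms]: state entered on a rising TCK edge with TMS = tms. */
static const tap_state next_state[16][2] = {
    [TEST_LOGIC_RESET] = { RUN_TEST_IDLE, TEST_LOGIC_RESET },
    [RUN_TEST_IDLE]    = { RUN_TEST_IDLE, SELECT_DR },
    [SELECT_DR]        = { CAPTURE_DR,    SELECT_IR },
    [CAPTURE_DR]       = { SHIFT_DR,      EXIT1_DR },
    [SHIFT_DR]         = { SHIFT_DR,      EXIT1_DR },
    [EXIT1_DR]         = { PAUSE_DR,      UPDATE_DR },
    [PAUSE_DR]         = { PAUSE_DR,      EXIT2_DR },
    [EXIT2_DR]         = { SHIFT_DR,      UPDATE_DR },
    [UPDATE_DR]        = { RUN_TEST_IDLE, SELECT_DR },
    [SELECT_IR]        = { CAPTURE_IR,    TEST_LOGIC_RESET },
    [CAPTURE_IR]       = { SHIFT_IR,      EXIT1_IR },
    [SHIFT_IR]         = { SHIFT_IR,      EXIT1_IR },
    [EXIT1_IR]         = { PAUSE_IR,      UPDATE_IR },
    [PAUSE_IR]         = { PAUSE_IR,      EXIT2_IR },
    [EXIT2_IR]         = { SHIFT_IR,      UPDATE_IR },
    [UPDATE_IR]        = { RUN_TEST_IDLE, SELECT_DR },
};

/* Advance the TAP by one TCK clock. */
tap_state tap_clock(tap_state s, int tms)
{
    return next_state[s][tms ? 1 : 0];
}

/* Clock `count` TMS bits taken from tms_bits, least significant bit first. */
tap_state tap_walk(tap_state s, unsigned tms_bits, int count)
{
    for (int i = 0; i < count; i++) {
        s = tap_clock(s, (int)((tms_bits >> i) & 1u));
    }
    return s;
}
```

Five clocks with TMS high reach Test-Logic-Reset from any state, and from there the TMS sequence 0, 1, 0, 0 lands in Shift-DR. A real player still needs the USB plumbing, timing, and TDO verification on top of this, which is presumably where the actual work hides.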

What’s on the BMOW Bookshelf

Over the decades, I’ve pared my collection of books down to just four shelves – only about 100 volumes from a lifetime of reading and studying. Because who needs physical books in an age where everything is available online? The few books that remain in my collection have survived a relentless process of repeated culling, until only the most meaningful and valuable ones remain. Want to take a peek? Here are some selections from the shelves.

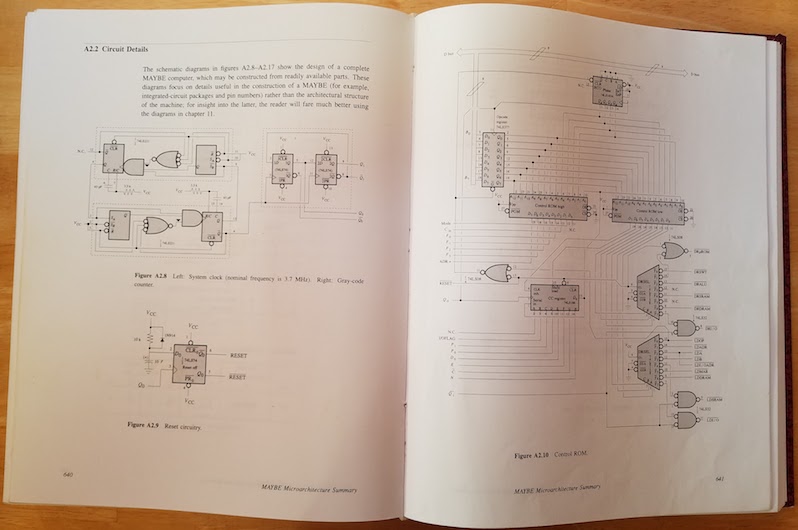

Computation Structures, by Ward and Halstead. This was my original digital electronics textbook, covering everything from the transistor to CPU design, and is the only one of my bazillion university textbooks to have survived the cull. This book and MIT’s 6.004 class are what first got me excited about building with electronics. The book is a bit dated now: for something more up-to-date, maybe try Horowitz and Hill instead.

At the end of Computation Structures are schematics and control software for a DIY 8-bit computer called the MAYBE, from which I liberally borrowed ideas for my BMOW 1 computer. I built the MAYBE on a giant breadboard in a suitcase, chip by chip and wire by wire, over a semester-long class.

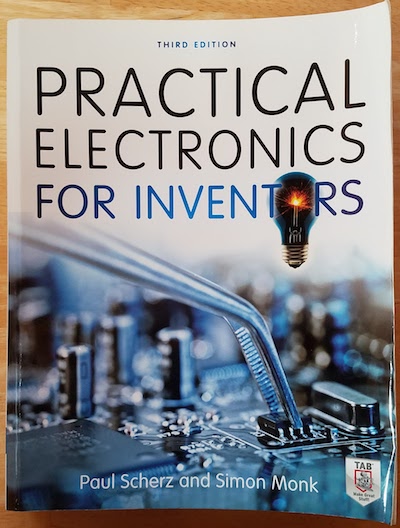

Practical Electronics for Inventors, by Scherz and Monk. This is a great reference for all those electronics details I should have learned, but didn’t. It puts an emphasis on practical engineering applications, with just enough theory to make sense of it all. When I find myself staring at some Forrest Mims schematic or other inscrutable circuit full of bipolar transistors and passives with unknown purpose, this book is a helpful deciphering aid.

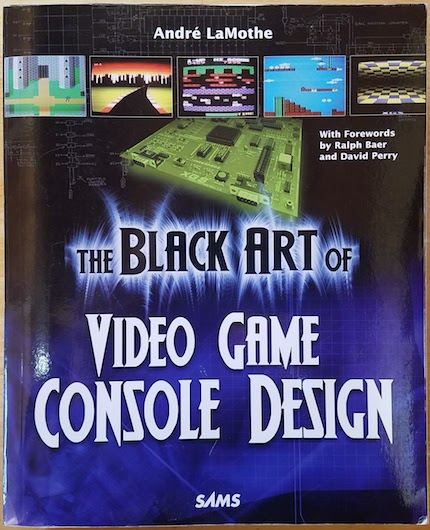

The Black Art of Video Game Console Design, by André LaMothe. Ignore the slightly misleading title. This book is a great how-to guide for designing and building digital electronics projects of all types, with a particular emphasis on microcontroller projects. It covers analog and digital theory, circuit analysis, prototyping techniques, computer architecture, video synthesis, relevant tools, and more. If I could only have a single reference book for all my BMOW projects, this would probably be it.

FPGA Prototyping with Verilog Examples, by Pong P. Chu. For anyone that’s interested in building things with FPGAs or CPLDs, but finds it hard to get past the phase of blinking an LED, check out this book. Verilog can be a difficult language for someone coming from procedural languages like C++. It looks superficially similar, but is actually radically different, with every line of “code” running in parallel. This book goes beyond the Hello World stuff, and provides well-explained examples like a digital stopwatch, a soft UART, PS/2 keyboard IO, and a memory controller. There’s also a VHDL version of this book by the same author, if you prefer that to Verilog.

Macintosh Repair and Upgrade Secrets, by Larry Pina. This book is the bible for vintage Mac collectors. It’s long out of print, but I managed to snag an old copy from eBay. Does your Mac Plus make a “flupping” sound and refuse to turn on? Got an original Mac 128K with floppy drive problems, or video that’s reduced to a single horizontal line? Larry explains how to fix it, pointing to exactly which components need testing and replacement. These were the good old days when hackers could fix their busted motherboard by replacing transistor Q5 with a Radio Shack soldering iron, instead of making an appointment at the Genius Bar.

The New Apple II User’s Guide, by David Finnigan. Unlike Macintosh Repair and Upgrade Secrets, Finnigan’s Apple II bible was published in 2012 and is therefore relatively up to date. It covers all the common repairs for the Apple II family, as well as lots of reference material for collectors who may not have grown up with an Apple II, or forgotten what CALL -151 does. What’s better, it also discusses many of the challenges and options for using an Apple II computer in the 21st century. These include how to get your Apple II on the internet, solid state floppy disk alternatives, archiving tools, and the like.

Web Database Applications with PHP and MySQL. This book is essentially “how to build an interactive web site for dummies” using 2004 technology, so it’s dated now, but I still refer to it occasionally. Even for someone with no plans to build a web site, it’s a good introduction to the concepts of how web sites work under the hood. The book describes the ever-popular LAMP stack (Linux, Apache, MySQL, PHP) used to build the front-end and back-end of web applications. Using the skills learned from this book, augmented with more online learning, I’ve built about a dozen different web sites over the years.

Reversing: Secrets of Reverse Engineering, by Eldad Eilam. This is a fascinating look at how software programs are put together, and how they can be pulled apart and modified. I’m not aware of any other books like this one, which makes it that much more entertaining. Reverse engineering sometimes gets a bad reputation, and some people believe it’s only relevant for defeating copy-protection or other morally questionable purposes. The author introduces other uses, such as dissecting and analyzing malware, or understanding software that lacks source code and documentation. For anyone who enjoyed my post about what happens before main(), or my quest to create the smallest possible Windows executable, I recommend checking out this book.

Compute! Magazine, January 1985. This is the only remaining example of my once-mighty collection of computer magazines. There’s no special significance to this particular issue, other than that it was the only one to escape the recycling bin. It’s always amusing to browse. Loading software from cassette tape? Compuserve ads? And who didn’t love typing in those 20-page long BASIC program listings?

Flatland, by Edwin A. Abbott. This classic 1884 science fiction “romance of many dimensions” will blow your mind in just 92 pages. Imagine you’re a three-dimensional being attempting to explain things to inhabitants of Flatland, a 2D world. Imagine those Flatlanders attempting to explain their world to the miserable inhabitants of 1D Lineland. Now ponder the implications for our own three-dimensional existence: is it any more real than Flatland or Lineland? Where are the 4D visitors to Earth, and what might they look like? It’s a fantastic thought experiment, and the side-plot satire of Victorian society values is a hilarious bonus.

The Monopoly Companion, by Philip Orbanes. If you thought Monopoly was just a silly game for kids, you’re wrong. Back in my university days, my group of friends played many hundreds of Monopoly rounds, arguing strenuously over trading and strategy. A good friend was the Massachusetts champion one year, and represented the state at the National Monopoly Championships held in Atlantic City. The book describes general strategies like a preference for certain color groups (orange is best), and the importance of building to the three house level. We created a detailed software simulation accounting for all board squares, rents, cards, and dice roll probabilities, and ran it millions of times to determine the best strategy. Then we sent a letter to this book’s author, disputing some of his conclusions. God, what a bunch of nerds we were.

Your Money or Your Life, by Dominguez and Robin. We all spend a tremendous amount of time and energy in the attempt to accumulate money, sometimes without really considering why. Yes we need a safe place to live, food to eat, and other necessities – but what then? The money itself won’t bring happiness. Have we really thought about exactly what will bring happiness? How much of our life’s energy are we willing to trade away for those things? Having identified them, might there be other ways to get similar things that require less or no money? Can money even buy them at all? Your Money or Your Life helps guide readers towards a future where they may not be rich in dollars, but are rich in the things they value most.

Finding Your Own North Star, by Martha Beck. Where are we headed? Why? What’s important to our essential self? Like other self-help books or the time-honored What Color is Your Parachute, this book aims to help identify paths that are best-aligned with one’s values. It resonated with me in a way that other similar books didn’t. One clever exercise was to get everybody on your side, by intentionally redefining who “everyone” is. Another was an invitation to envision the best possible personal future imaginable, and map it out. The act of defining a goal already brings it closer.

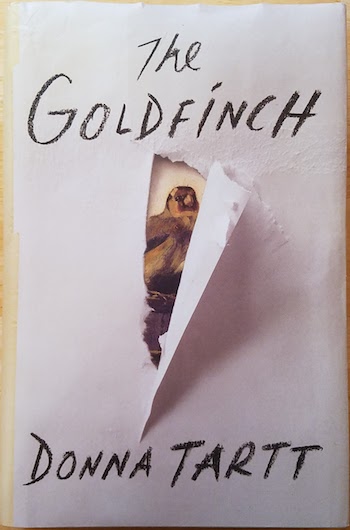

The Goldfinch, by Donna Tartt. A friend and I read this Pulitzer Prize winning novel at the same time. She thought it was just OK. I thought it was outstanding. It was one of those books that when you finish, you set it gently in your lap and just stare vacantly into space for a long while, trying to digest it all. Tartt’s prose is rich and evocative like a master painting, and her story of loss and disaffection is heartbreaking. But what really moved me was the portrayal of the awkward intimacy of adolescent male friendships, the things said and unsaid but understood, the struggle to carry those relationships into adulthood. I felt Tartt had snooped inside my mind and extracted feelings I didn’t even know were there.

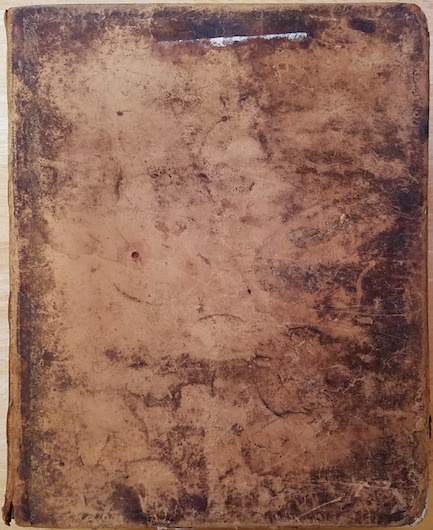

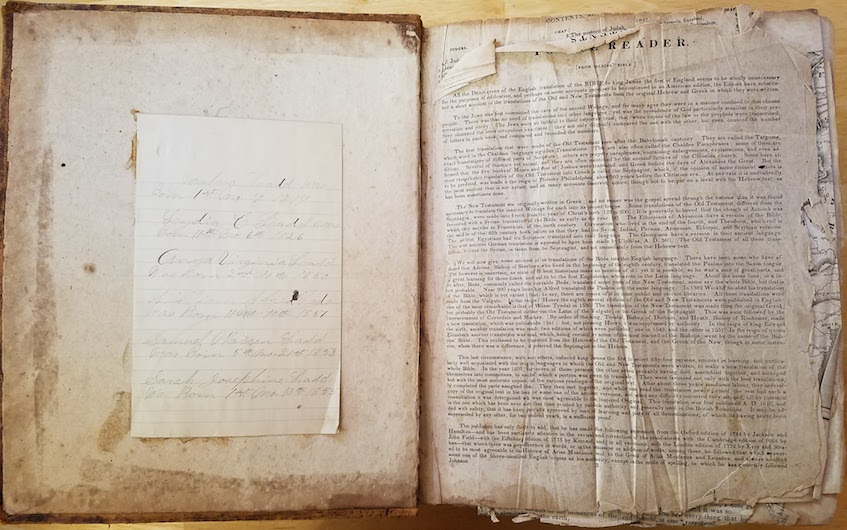

A family bible from 1817. Not many artifacts survived the past two centuries with my family, but this one did. It’s a huge volume with an imposing leather cover, and on the inside leaf are inscribed the births and deaths of generations.

“Joshua Ladd was born 1st mo. 7 1817”. That’s January 7 to us, so Joshua just had his 201st birthday recently. He was my great-great-great-great-grandfather, a farmer originally from Virginia who at age 14 moved to Ohio with his mother and siblings after the death of their father. Ohio had just become a state, and the family moved in search of new opportunities. Joshua opened a grocery and supply store in the town of Westville, near Damascus OH. I still have the wedding gloves of Joshua’s daughter Sarah tucked safely in a box. Any Ladds in your family tree? Maybe we’re related.

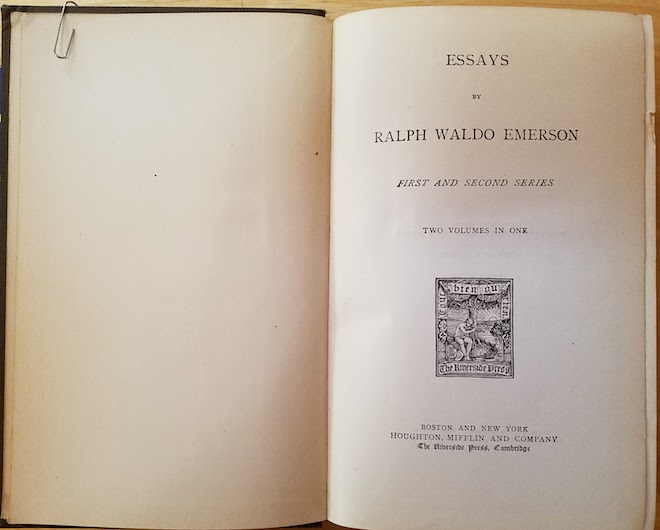

Essays by Ralph Waldo Emerson. This particular volume is from 1883, and was passed down through generations to my father and then me. Sometimes I think those Transcendentalist authors like Emerson, Longfellow, and Thoreau had everything figured out in 1870, and we haven’t accomplished much since. “When you were born you were crying and everyone else was smiling. Live your life so at the end, you’re the one who is smiling and everyone else is crying.”

Yellowstone JTAG Debugging

After a month of inactivity, I finally returned to my unfinished Yellowstone disk controller project to investigate the JTAG programming problems. Yellowstone is an FPGA-based disk controller card for the Apple II family, that aims to emulate a Liron disk controller or other models of vintage disk controller. It’s still a work in progress.

Last month I discovered some JTAG problems. With the Yellowstone card naked on my desk, and powered from an external 5V supply, JTAG programming works fine. I can program the FPGA to blink an on-board LED. And when I insert the already-programmed card into my Apple IIe and power it from the slot, it works – the LED blinks. But if I try to do JTAG programming while the card is inserted in the IIe, it always fails with a communication error. I’ve run through several theories why:

- It might be some kind of noise or poor signal integrity on the JTAG traces. But the traces are quite short and don’t cross any other signal traces that might carry interfering signals.

- Maybe I have power problems, and the IIe’s 5V supply is drooping briefly when I try to program the FPGA via JTAG. But I measured the 5V and 3.3V supply voltages during JTAG programming, and they look fine.

- There might be a ground loop, due to the Apple IIe and JTAG programmer having different ground potentials. But I measured the difference in grounds, and it’s only 4.3 millivolts.

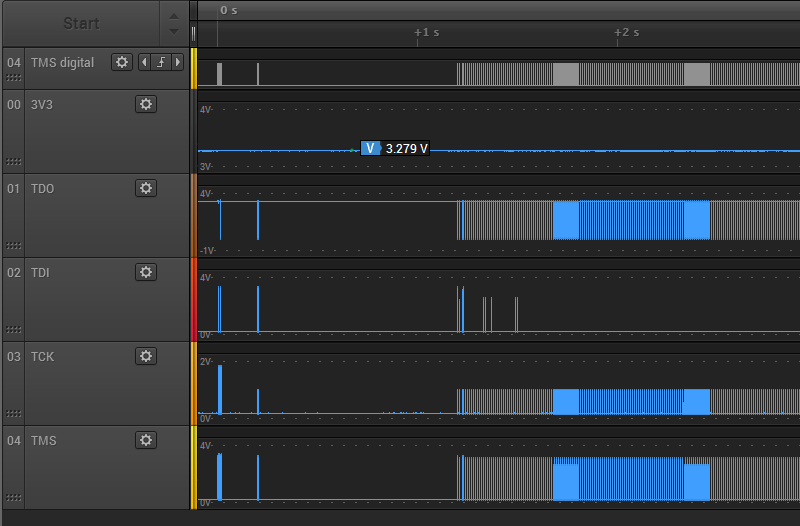

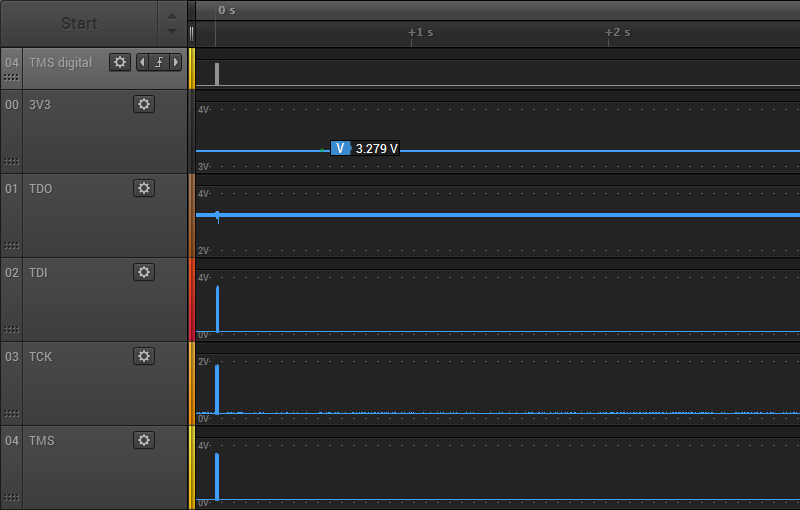

To help solve this mystery, I used the analog mode of my Saleae Pro 8 Logic analyzer. In analog mode, it functions like a simple 8-channel 12.5 Ms/sec oscilloscope. I recorded the 3.3V supply for the FPGA, as well as all the JTAG signals. First, here’s what the first three seconds of JTAG traffic look like when programmed externally:

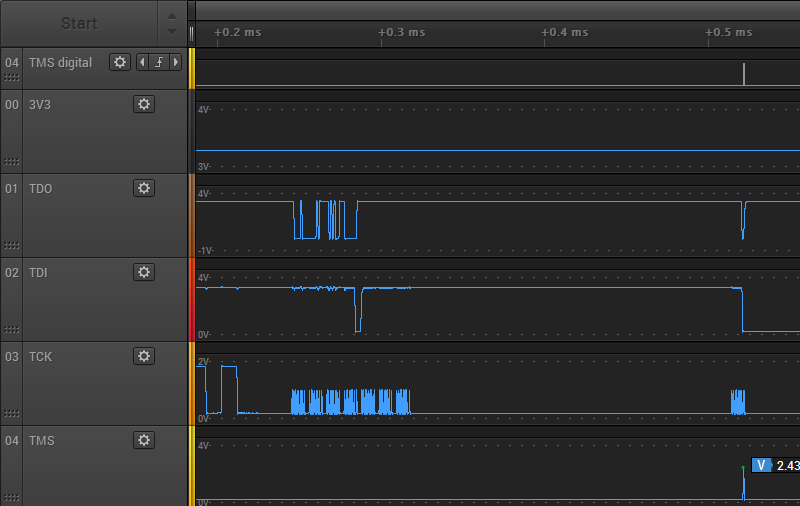

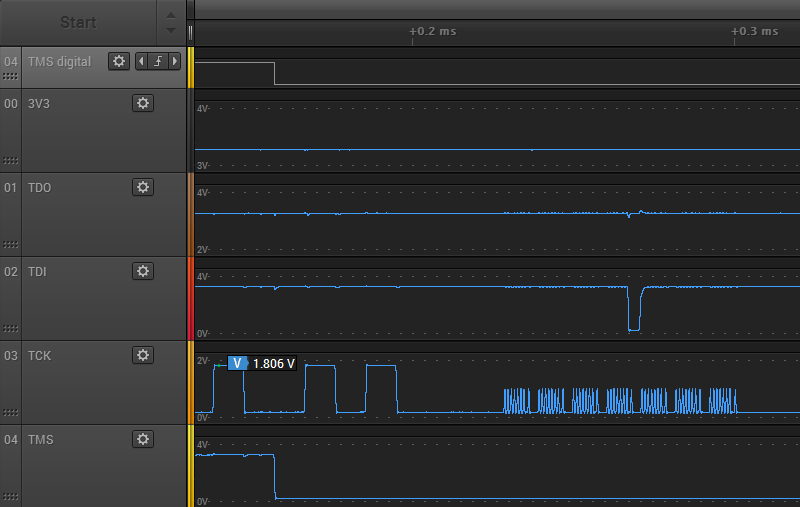

There’s about 1 second of preamble communication, and the rest is the FPGA configuration data arriving at high speed. The 3.3V supply for the FPGA remains at about 3.28V through the whole process. The JTAG signals TMS, TDI, and TDO span the voltage range from 0.17V to 3.2V, which seems fine. But the TCK signal never goes higher than 1.86V. Uh oh, what’s happening there? Let’s zoom in a little:

Zooming in, things are even worse than they appeared initially. TCK never climbs above 1.86V, but many TCK pulses only get half that high, stopping at 0.97V. TMS and TDI show some runts too. Zooming in even further on one of the problem areas:

Here you can see a couple of the 1.86V TCK pulses, followed by a whole mess of the runtier 0.97V pulses. Ugh. These should all be using the full range 0 to 3.3V, or something close to it. With a clock signal this bad, it’s amazing the JTAG programming still works.
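Counting runts by eye gets tedious, so here's a small C sketch of how exported analog samples could be scanned for them. It treats anything that rises above a low threshold as a pulse and flags the pulse as a runt if its peak never reaches a high threshold. The thresholds are assumptions (2.0V is a typical input-high level for 3.3V logic, but the FPGA data sheet is the real authority), and `count_runts` is a made-up helper, not part of any Saleae tool.

```c
#include <stddef.h>

typedef struct {
    size_t pulses; /* total pulses seen */
    size_t runts;  /* pulses whose peak stayed below v_high */
} pulse_stats;

/* Scan n voltage samples. A pulse starts when a sample rises above v_low
   and ends when a sample falls back to v_low or below. */
pulse_stats count_runts(const double *volts, size_t n,
                        double v_low, double v_high)
{
    pulse_stats st = { 0, 0 };
    int in_pulse = 0;
    double peak = 0.0;

    for (size_t i = 0; i < n; i++) {
        if (volts[i] > v_low) {
            if (!in_pulse) {
                in_pulse = 1;
                peak = volts[i];
            } else if (volts[i] > peak) {
                peak = volts[i];
            }
        } else if (in_pulse) {
            /* Pulse just ended: classify it by its peak. */
            in_pulse = 0;
            st.pulses++;
            if (peak < v_high) {
                st.runts++;
            }
        }
    }
    if (in_pulse) {
        /* Capture ended mid-pulse: count what was seen so far. */
        st.pulses++;
        if (peak < v_high) {
            st.runts++;
        }
    }
    return st;
}
```

Fed the TCK levels described above with thresholds of 0.5V and 2.0V, every pulse would be classified as a runt, since neither the 1.86V nor the 0.97V peaks reach 2.0V.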

Do these graphs really reflect what’s happening? I’m a little suspicious that I’m running into limitations of the Saleae Pro 8’s analog mode. At 12.5 Ms/sec, it’s taking one analog sample every 0.08 microseconds or 80 nanoseconds. That’s pretty poor as scopes go, but the period of the JTAG clock is slow: about 1 microsecond (1 MHz operation). There should be 12.5 samples per clock period, more than enough to get a decent reading for the min and max voltage of each clock period. Therefore I think the graphs are accurate.
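The sampling arithmetic is simple enough to write down as two one-line C functions. This is just the calculation from the paragraph above, with the 12.5 Ms/sec rate and 1 MHz clock passed in as parameters; the function names are my own.

```c
/* Time between samples in nanoseconds, for a sample rate in samples/sec. */
double sample_interval_ns(double sample_rate_hz)
{
    return 1e9 / sample_rate_hz;
}

/* Number of samples captured during one period of a periodic signal. */
double samples_per_period(double sample_rate_hz, double signal_hz)
{
    return sample_rate_hz / signal_hz;
}
```

`sample_interval_ns(12.5e6)` gives 80 and `samples_per_period(12.5e6, 1e6)` gives 12.5. Assuming a roughly 50% duty cycle, that's about six samples during each high phase of TCK.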

My conclusion is that although external JTAG programming succeeds, the JTAG signals look terrible. The fact that JTAG programming fails when the card is in the Apple IIe slot likely has little to do with the IIe, and everything to do with some other basic signal quality problem.

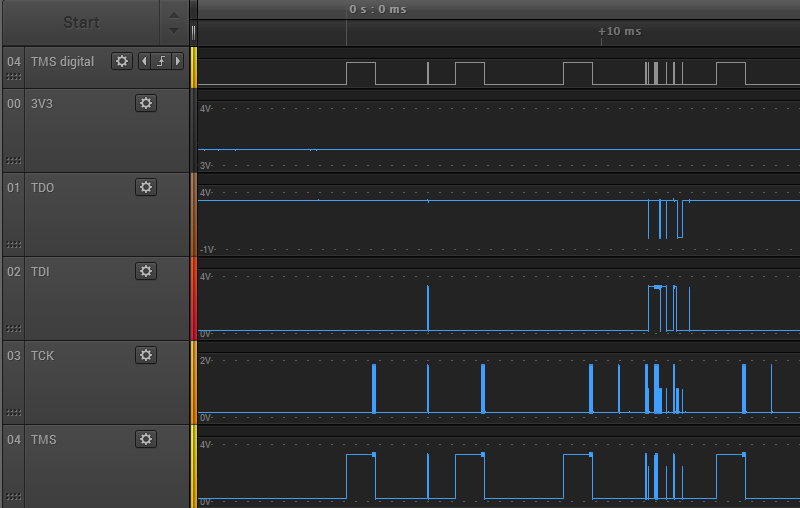

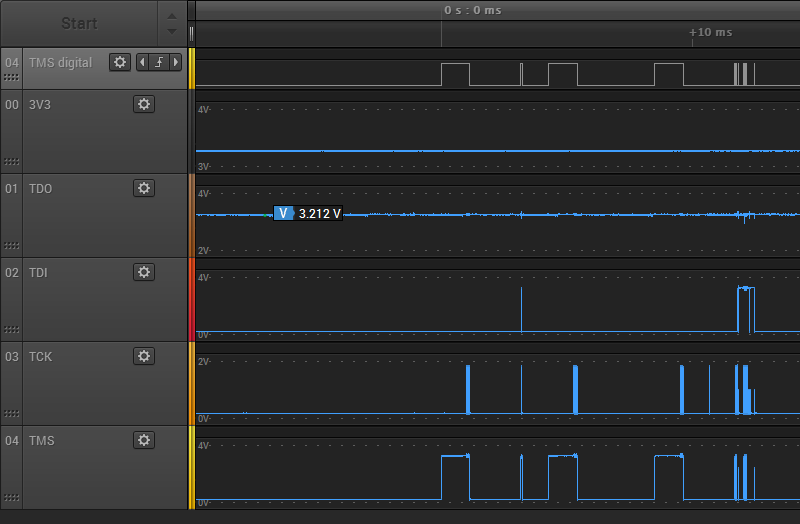

Next I put the Yellowstone card into the Apple IIe, and repeated my test. Here’s the first three seconds of JTAG traffic again:

There’s a short bit of preamble communication, then nothing. Something must go wrong at the beginning, and the rest of the communication is aborted. The voltage levels all look about the same as when programming externally. The 3.3V supply is about 3.28V, TCK never goes higher than 1.86V, but the other JTAG signals use the full voltage range. Zooming in, we observe the same extra-runty TCK pulses as with external JTAG programming:

It’s not obvious to me why external JTAG programming succeeds, but programming in the Apple IIe slot fails. Both cases look equally bad. The only real difference I noticed is the TDO signal. In the case of external programming, the TDO high voltage is very steady at about 3.268 volts, and never varies by more than 0.01V. It also drops low at many points during the JTAG communication. But in the case of in-slot programming, the TDO signal is always high and the voltage is noisier. It’s a subtle difference, but you can see the minor noise here:

The TDO high voltage ranges from 3.187V to 3.314V, so it’s about 10x noisier than during external programming. It’s still within an acceptable range though, so maybe this isn’t important.

Finding the Culprit

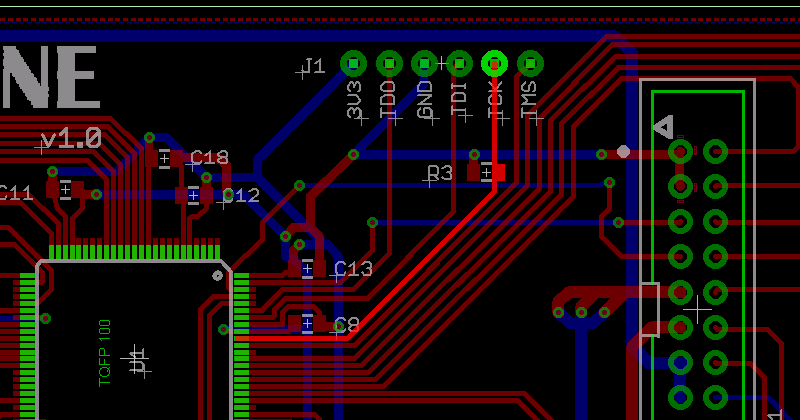

Now that I know I have poor quality JTAG signals, where do I look for the cause? Poor quality JTAG programmer? Bad PCB design? Here’s a section of the PCB, showing the path of the JTAG signals from the connector to the FPGA:

There’s not much opportunity for interference. The only PCB tracks that are crossed by the JTAG signals are voltage supplies and the disk I/O signals, which were unconnected during this test.

The prime suspect is R3, a 4.7K pulldown resistor on TCK. This was recommended by Lattice, as a precaution to prevent spurious TCK pulses causing unwanted JTAG activity when no JTAG programmer is present. Lattice technote TN1208 for the MachXO2 family says on page 12-2 “TCK: Recommended 4.7kOhm pull down.” The other JTAG signals discussed here all have internal pull-ups. 4.7K isn’t much, but maybe the JTAG programmer has an anemic drive strength and is unable to drive TCK fully to 3.3V with the pulldown present? I could try removing R3, but that wouldn’t explain why there are also some runt pulses seen on TMS and TDI. I double-checked to confirm I didn’t accidentally use the wrong value resistor for R3, but no: it’s 4.7K as intended. I also measured the resistance between TCK and GND, to see if there’s some other unintended low-resistance path to GND that’s screwing up everything, but it measured 4.7K exactly.
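One way to sanity-check the weak-driver theory is to treat the programmer's output and R3 as a plain resistive voltage divider, and ask what output resistance would produce the observed TCK level. The C sketch below does that. It's a deliberately crude model: it assumes the programmer drives a clean 3.3V behind a fixed series resistance and ignores capacitance, FPGA input characteristics, and anything else on the line. The function names are hypothetical.

```c
/* Voltage at TCK when a driver with output resistance r_source (ohms)
   drives high to v_drive against a pulldown of r_pulldown (ohms). */
double divider_high_voltage(double v_drive, double r_source,
                            double r_pulldown)
{
    return v_drive * r_pulldown / (r_source + r_pulldown);
}

/* Source resistance that would explain an observed high level,
   under the same simple divider model. */
double implied_source_resistance(double v_drive, double v_observed,
                                 double r_pulldown)
{
    return r_pulldown * (v_drive - v_observed) / v_observed;
}
```

Under this model, a 1.86V high level against the 4.7K pulldown implies roughly 3.6K of source resistance, while the 0.97V runts would need roughly 11.3K. A single fixed source resistance can't produce both levels, so the divider model on its own doesn't account for everything in the captures.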

Meltdown and Spectre Vulnerabilities Explained

This week brought some fascinating news for CPU nerds: the revelation of security vulnerabilities in the basic hardware architecture of many modern processors. The Meltdown and Spectre vulnerabilities affect virtually all modern computers, according to a sensationalist headline at The Register which called them the “worst ever” CPU bugs. Unlike bugs in a specific software program or operating system that can be fixed with a patch, these are fundamental flaws in the very design of the CPU.

What are these vulnerabilities exactly, how do they work, and how are Meltdown and Spectre different from each other? I must have read twenty different news stories without finding a clear answer. The best I could get was that these vulnerabilities somehow involve the CPU’s use of speculative execution: an optimization trick that executes code before it’s known whether the code should really be executed, discarding the results if it’s later determined that the code wasn’t needed. But as a guy who designs custom CPUs as a hobby, I needed a better answer than that. So I started poking beyond the news headlines into the gory tech details.

What follows is my attempt to explain Meltdown and Spectre to myself, and by extension to readers of this blog. It assumes readers already have some basic knowledge of concepts like CPU internals, caches, and operating systems. But more so than normal for this blog, I’ll be discussing details that I don’t fully understand myself, and my explanations may be flawed. If you find an error or omission, kindly post a comment and let me know.

Speculative Execution + Caching = Vulnerability

Both Meltdown and Spectre exploit the fact that speculatively executed code can modify the CPU cache. Even if the code is never “really” executed, meaning that it never modifies CPU registers or memory or other processor state, the cache effects of the speculatively executed code can be observed by cleverly-constructed code that follows it, by testing what is and isn’t cached. Consider this example, taken from Google’s Project Zero Blog:

struct array {
    unsigned long length;
    unsigned char data[];
};
struct array *arr1 = ...;
unsigned long untrusted_offset_from_caller = ...;
if (untrusted_offset_from_caller < arr1->length) {
    unsigned char value = arr1->data[untrusted_offset_from_caller];
    ...
}

If arr1->length is not presently in the cache, the CPU won’t immediately know whether the if clause will evaluate true or false. If the branch predictor guesses true, then the if body will execute speculatively while arr1->length is fetched from main memory. Speculative execution will cause arr1->data[untrusted_offset_from_caller] to be loaded from main memory into the cache, even if untrusted_offset_from_caller is out of bounds and the guess later turns out to be wrong. This behavior can be leveraged in several different ways (see below) to gain information about protected regions of memory that are supposed to be private: memory owned by other processes or the kernel. The ability to observe protected memory makes it possible to read passwords, Bitcoin keys, emails, or other sensitive information.

Spectre: Speculative Execution in Branch Prediction

The Spectre vulnerability requires getting the victim (the kernel or another process) to run specially-constructed code, which then leaks information through the cache effects of speculative execution. Consider an expanded version of the previous example.

struct array {
    unsigned long length;
    unsigned char data[];
};
struct array *arr1 = ...; /* small array */
struct array *arr2 = ...; /* array of size 0x400 */
unsigned long untrusted_offset_from_caller = ...;
if (untrusted_offset_from_caller < arr1->length) {
    unsigned char value = arr1->data[untrusted_offset_from_caller];
    unsigned long index2 = ((value&1)*0x100)+0x200;
    if (index2 < arr2->length) {
        unsigned char value2 = arr2->data[index2];
    }
}

If arr1->length is not currently in the cache, speculative execution will continue inside the body of the if clause. Either arr2->data[0x200] or arr2->data[0x300] will be fetched from main memory and cached, depending on the least significant bit of arr1->data[untrusted_offset_from_caller]. After the speculative execution has ended, the attacking user mode code can measure how much time is required to load arr2->data[0x200] and arr2->data[0x300]. Whichever one was cached will load faster, revealing whether the LSB of arr1->data[untrusted_offset_from_caller] is 0 or 1. By repeating this process with other bit masks, the attacker can eventually read all of arr1->data[untrusted_offset_from_caller]. And by the choice of untrusted_offset_from_caller, the attacker can read any memory location.
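
To convince myself that the bit-by-bit readout really works, here’s a toy simulation in C. It is not an exploit: the “cache” is just an array of flags, the victim’s speculative if-body is an ordinary function, and the timing measurement is replaced by a flag lookup. The names flush_probe_slots, victim_speculate, probe_is_fast and leak_byte are my own inventions, and I select each bit with a shift instead of the different bit masks mentioned above, which amounts to the same thing.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Toy model only: the "cache" is a table of flags, one per 0x100-byte
   region of arr2. A real attack times actual memory loads instead. */
#define PROBE_SLOTS 4   /* arr2 is 0x400 bytes -> 4 regions of 0x100 */

static bool cached[PROBE_SLOTS];

/* Attacker, step 1: evict arr2 so that no probe region is cached. */
static void flush_probe_slots(void) {
    memset(cached, 0, sizeof cached);
}

/* Stand-in for the victim's speculatively executed if-body. Its only
   lasting effect is which region of arr2 ends up in the cache. */
static void victim_speculate(const unsigned char *memory, size_t offset,
                             unsigned bit) {
    assert(bit < 8);
    unsigned char value = memory[offset];
    size_t index2 = (size_t)((value >> bit) & 1) * 0x100 + 0x200;
    cached[index2 / 0x100] = true;
}

/* Attacker, step 2: in a real attack this is a load-time measurement. */
static bool probe_is_fast(size_t index2) {
    return cached[index2 / 0x100];
}

/* Recover one byte of "secret" memory, one bit per round. */
static unsigned char leak_byte(const unsigned char *memory, size_t offset) {
    unsigned char result = 0;
    for (unsigned bit = 0; bit < 8; bit++) {
        flush_probe_slots();
        victim_speculate(memory, offset, bit);
        if (probe_is_fast(0x300))          /* 0x300 cached -> bit was 1 */
            result |= (unsigned char)(1u << bit);
    }
    return result;
}
```

Calling leak_byte(memory, n) returns memory[n] without the attacker side ever reading that byte directly; everything it learns comes from which probe region was touched. In the real attack, memory[offset] is something the attacker has no permission to read at all.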

That’s the general idea. Some implementation details and optimization methods are described in the Project Zero blog. The blog also describes another Spectre variant exploiting speculative execution through indirect branches, which I didn’t examine.

How can a user process get the kernel to run this kind of specially-constructed code? It turns out that the Linux kernel has a feature called eBPF that’s designed for this exact purpose, presumably to allow for device drivers or socket filters or other snippets of user-provided code that need to run in the kernel. I’m assuming Windows and Mac OS have something similar. Since running arbitrary user-provided code in the kernel would be a huge security vulnerability itself, eBPF actually runs the code in an interpreter or a JIT engine. But that’s enough to exploit this vulnerability.

Note: after writing this post, I found a second explanation of Spectre from a group of academic researchers working independently from Google Project Zero. Their paper describes Spectre slightly differently, and discusses attacking other processes rather than the kernel. It also includes a proof of concept JavaScript attack, in which a malicious bit of JavaScript is able to read private memory from the web browser process. Relying on the fact that JavaScript is typically JIT compiled to native code, and using debug tools to examine the native output, the authors were able to iteratively tweak the JavaScript source code until it produced native code containing an exploitable conditional branch of the kind shown above.

Spectre affects essentially all modern CPUs, given the proper conditions: Intel, AMD, ARM, etc.

Meltdown: Exploiting Out of Order Execution

Meltdown is similar to Spectre, in that they both leak information through the cache from instructions that were never “really” executed. However, with Meltdown there’s no branching involved. The attack relies on the way modern CPUs optimize performance by employing out of order instruction execution. Depending on which execution units are free and whether the data an instruction depends on is ready, instructions are sometimes executed in a different order than they appear in a program, but they are retired (registers and state are updated) in order. The fact that they were executed out of order should be invisible to the program, but Meltdown shows that’s not always true. The details are described at cyber.wtf and in a separate academic paper.

When instructions are executed out of order, but aren’t yet retired, their results exist in a kind of limbo that’s very similar to speculatively executed code from branch prediction. However, it’s not clear whether this should properly be called speculative execution. The Meltdown paper refers to these as “transient instructions”, which seems like a good term.

Consider this code:

mov rax, [someKernelAddress]
and rax, 1
mov rbx, [rax+someUserModeAddress]

The first instruction should cause an exception if executed from a user mode process, because it’s an attempt to access kernel memory. However, at least on Intel architectures, it appears that the exception doesn’t occur until the instruction is actually retired. If this code is executing as part of a transient instruction sequence, no exception will yet occur, the privilege violation (bypassing kernel memory protection) will be ignored, and the transient read will succeed. The following transient instructions that use the read value will also succeed, and will affect the cache state in the same way as Spectre, creating a side-channel for leaking information about kernel memory.

Eventually, the first instruction will be retired and the exception will occur. The state changes related to the following instructions will be discarded, because those instructions were never really executed, but their cache side effects will remain. After an exception handler resolves the exception, further code can measure how long it takes to load from address someUserModeAddress vs someUserModeAddress+1, thereby inferring the LSB of the byte stored at someKernelAddress. (As written, those two addresses are only one byte apart and would normally share a cache line, so a practical exploit has to scale the bit so that the two candidates land on different cache lines; the +1 here is a simplification.) Further iterations can read the other bits.
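
The “measure how long it takes to load” step is noisy in practice, so it helps to repeat the attack and vote. Below is a minimal sketch of that decision logic in C, assuming the load latencies for the two candidate addresses have already been collected, one pair per attack round. The function name infer_bit, the threshold parameter, and the cycle counts in the usage note are all hypothetical; a real threshold has to be calibrated on the target machine.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Decide which of two probe addresses was cached, given load latencies
   (for example in CPU cycles) gathered over several attack rounds.
   lat0[i] and lat1[i] are the latencies seen in round i for the
   addresses that correspond to bit = 0 and bit = 1.
   threshold separates a cache hit from a miss; it is an assumption
   here and must be calibrated for the machine in question.
   Returns 0 or 1 for the inferred bit, or -1 if the rounds were
   inconclusive and the attack should be repeated. */
static int infer_bit(const uint64_t *lat0, const uint64_t *lat1,
                     size_t rounds, uint64_t threshold) {
    assert(lat0 != NULL && lat1 != NULL);
    size_t votes0 = 0, votes1 = 0;
    for (size_t i = 0; i < rounds; i++) {
        int hit0 = lat0[i] < threshold;
        int hit1 = lat1[i] < threshold;
        /* Only count rounds where exactly one address looked cached. */
        if (hit0 && !hit1) votes0++;
        if (hit1 && !hit0) votes1++;
    }
    if (votes0 > votes1) return 0;
    if (votes1 > votes0) return 1;
    return -1;
}
```

With made-up latencies of {60, 310, 55} cycles for the first address, {300, 290, 320} for the second, and a threshold of 150, two rounds vote for the first address and none for the second, so infer_bit returns 0. The same logic applies to the Spectre measurement of arr2->data[0x200] vs arr2->data[0x300].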

To ensure that the Meltdown code sequence executes as an out-of-order transient sequence, the cyber.wtf technique includes a long series of filler instructions ahead of it. These filler instructions all use a different execution unit than the units needed by the Meltdown sequence, and create a long chain of interdependent instructions. So the CPU must sequentially execute the filler instructions one at a time, but meanwhile it can also jump ahead and execute the Meltdown instructions out of order.

Note that “out of order” here refers to the Meltdown code being executed out of order relative to the filler instructions. The Meltdown instructions themselves are executed in order relative to each other, since each one is dependent on the previous one.

At this time, Meltdown appears to be limited to Intel CPUs only. It’s uncertain whether this is due to a fundamental difference in how Intel handles memory protection with respect to out-of-order execution, or is simply due to differences in the size of the reorder buffer between CPU vendors. In a short aside on Meltdown, the Spectre paper states “Meltdown exploits a privilege escalation vulnerability specific to Intel processors, due to which speculatively executed instructions can bypass memory protection.” However, the Meltdown paper reports that the authors were able to observe bypassing of memory protection during out of order execution on ARM and AMD processors too, but were unable to construct a working exploit.

Impact and Mitigation

Both of these vulnerabilities are very bad, enabling user mode code to read other protected memory. However, they’re both local exploits: the attacking code must be running on the machine being attacked, so an attacker must somehow get their code onto your machine first. For this reason, the greatest risk is probably to cloud computing environments, where processes from many different people are running on the same machine, supposedly isolated from one another thanks to memory protection. But there’s also a risk in any situation where one computer runs code received from another, even inside a VM or sandbox.

It’s not clear how Spectre can be fixed in software, because it relies on CPU features that are fundamental to all modern processors. In fact, I don’t think it can be fixed in software – at least not in any general way. One web site says, “as Spectre is not easy to fix, it will haunt us for a long time.” The only good news is that convincing the kernel to run an attacker’s special code with eBPF or other methods isn’t easy. And web browsers can be patched to protect against the sort of JavaScript Spectre attacks described in the research paper. But other opportunities for Spectre attacks will remain. Future CPUs may contain hardware fixes for Spectre, but they’ll likely come with a complexity and performance penalty. Maybe cache lines will have to be treated as process-specific data instead of as shared resources.

Meltdown is the more serious of these two vulnerabilities, because it happens entirely in a single user space program and doesn’t require any special code in the victim process or kernel. In the real world, this makes it much easier for an attacker to exploit. Fortunately Meltdown can be fixed at the operating system level, but the fix carries a performance penalty that may be as high as 30%.

In the Meltdown sample code, readers may have wondered how an instruction like mov rax, [someKernelAddress] could possibly work in user mode code, even speculatively, since the kernel uses a different virtual to physical address mapping than the user mode process. It turns out that Linux (and I assume other operating systems too) maps the kernel’s memory into the address space of every user process, for performance reasons. By keeping the kernel permanently mapped, there’s no need to flush the TLB when switching between user and kernel space, and TLB entries for kernel space never need to be flushed. The processor’s MMU can normally be trusted to prevent user processes from accessing this kernel memory – except in the case we’ve just seen. The details are nicely explained in the description of a related technology named KAISER.

The “fix” is KPTI: kernel page table isolation. It ends the longstanding practice of mapping kernel memory into the address space of user processes, so that mov rax, [someKernelAddress] can no longer reveal any information. However, it means that the TLB must be flushed every time there’s a switch between user and kernel code. If a user process makes frequent kernel calls, the constant TLB flushing will be expensive and have a significant negative performance impact.
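
To get a feel for why the penalty depends so heavily on the workload, here’s a back-of-the-envelope model in C. It’s only a sketch: the function name and both parameters are hypothetical, and the extra cost per user/kernel transition (the flush itself plus the TLB misses that follow) is something you’d have to measure, not a number I can vouch for.

```c
#include <assert.h>

/* Rough fraction of CPU time lost to the extra work done on each
   user/kernel transition. Both inputs are hypothetical workload
   parameters, not measurements:
     transitions_per_sec      - syscalls, interrupts, etc. per second
     extra_ns_per_transition  - added cost of each one, in nanoseconds
   The result is clamped to 1.0 (100% of the CPU). */
static double kpti_overhead_fraction(double transitions_per_sec,
                                     double extra_ns_per_transition) {
    assert(transitions_per_sec >= 0.0);
    assert(extra_ns_per_transition >= 0.0);
    double extra_ns_per_sec = transitions_per_sec * extra_ns_per_transition;
    double fraction = extra_ns_per_sec / 1e9;   /* 1e9 ns in one second */
    return fraction > 1.0 ? 1.0 : fraction;
}
```

For example, a process making 100,000 kernel transitions per second at an assumed extra 1,000 ns each would lose about 10% of its CPU time under this model, while one making 1,000 per second would lose about 0.1%. That’s the intuition behind a compute-bound program barely noticing the fix while a syscall-heavy one suffers.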

The Future

Meltdown and Spectre are scary, not only because of what they can do themselves, but because they introduce a Pandora’s Box of new vulnerability types we’re sure to see more of in the future. We can no longer think about security analysis as something that gets applied to specific software programs or operating systems, examining the code and imagining it being executed instruction by instruction in an abstract environment. We must now consider the specific highly complex CPU (often not fully documented) that runs this software, and understand the many subtle ways in which the true hardware behavior differs from the instruction-by-instruction conceptual model.

The KAISER document mentions techniques like exploiting timing differences in fault handling, observing the behavior of prefetch instructions, and forcing faults using the Intel TSX (transactional memory) instructions. This will be a new frontier for most developers, forcing them to peel back a layer of abstraction when evaluating future computer security issues. The world just got a lot more complicated.
